Research in Action

Research Brief -

AI tools are arriving in schools faster than research can evaluate them. Teachers are experimenting with new tools and districts are writing policies, all while students are already using AI both inside and outside the classroom.

But for many education leaders, a basic question remains: What does rigorous research actually say about how AI affects teaching and learning?

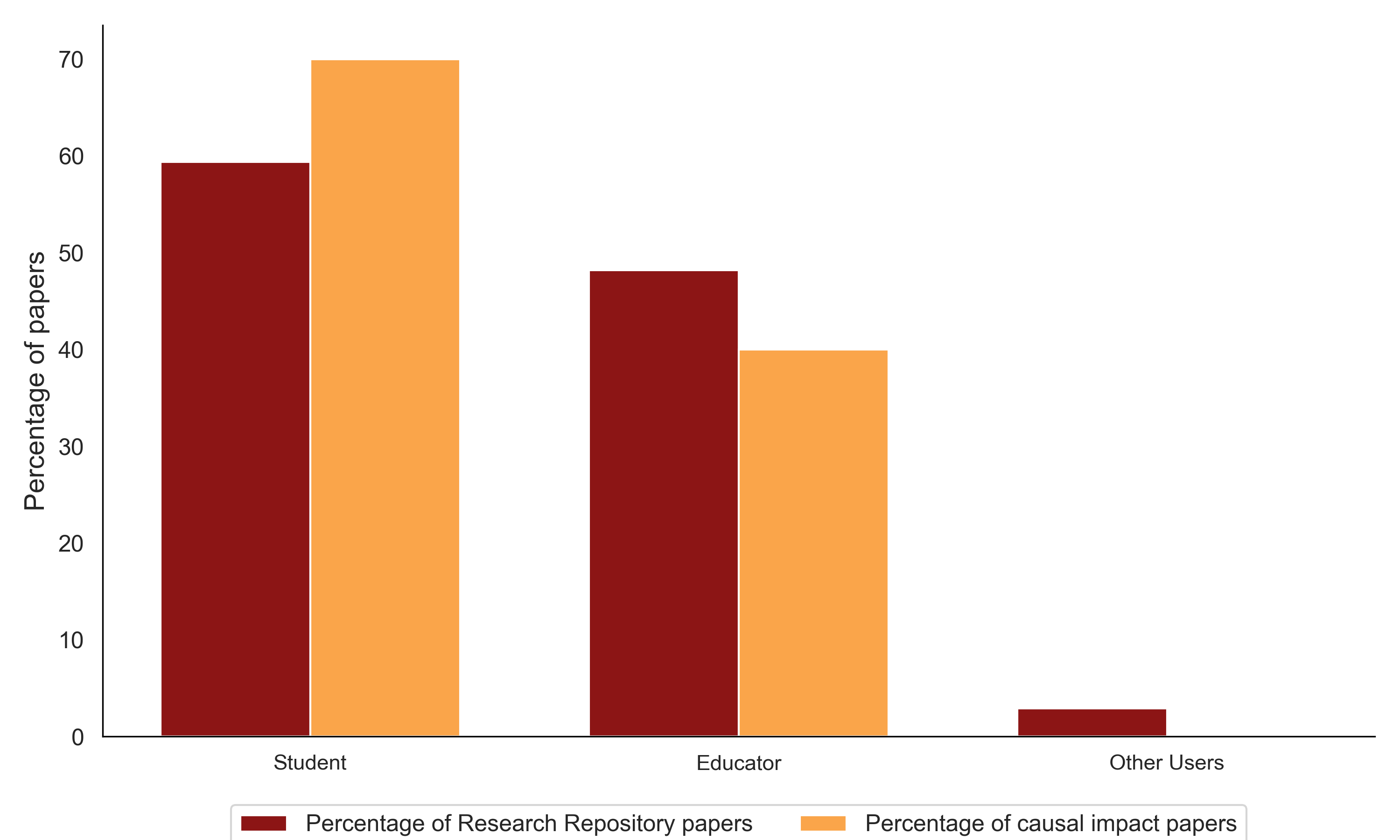

To help answer that question, we released a new report: The Evidence Base on AI in K-12: A 2026 Review. The report reviews the current research, focusing specifically on studies that convincingly estimate causal impact, meaning studies that can tell us whether an AI tool changed outcomes for students or educators.

News and Announcements -

Education is one of AI’s most promising frontiers. With tools like ChatGPT, personalized learning support can be available to any student, anywhere, at any time.

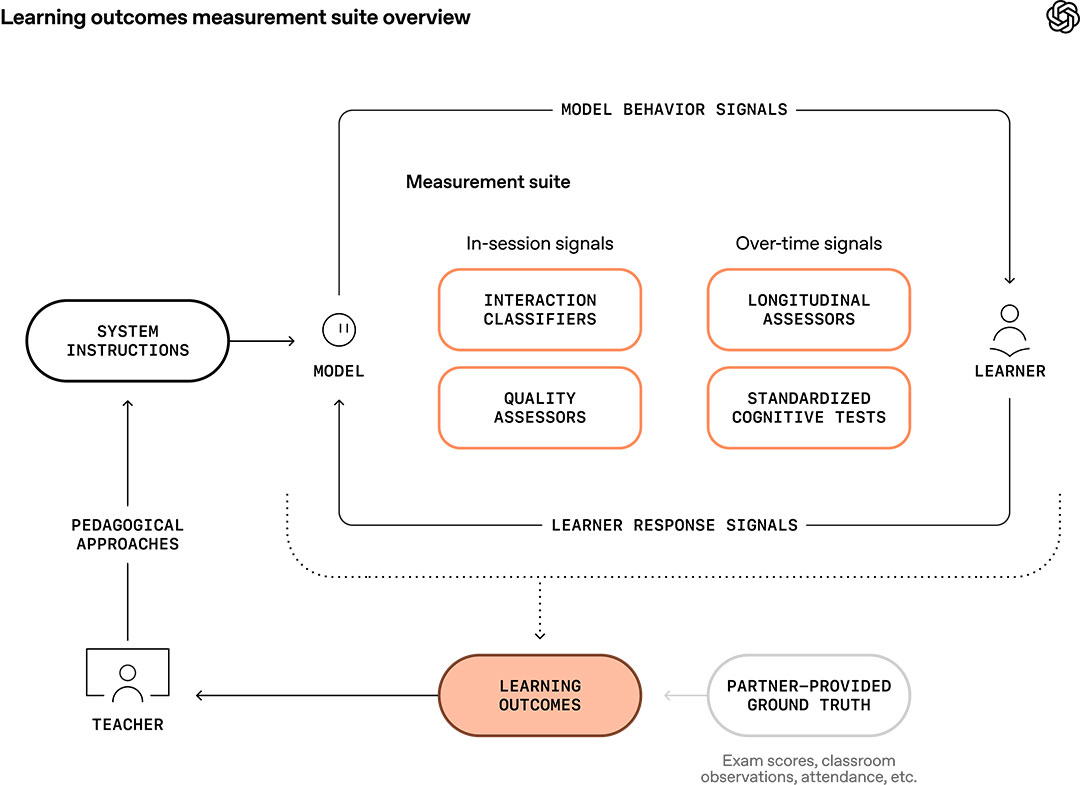

But the education sector is still early in its understanding of the impact of AI on learning outcomes. Last year, our team set out to study the use of tools like study mode and found promising gains in student performance. But our research also raised an important question: how can we assess how AI influences a learner's progress over time, not just on a final exam?

This is a broader ecosystem challenge. To date, most research methods focus on narrow performance signals, such as test scores, and cannot assess how students actually learn with AI in real-world settings or how that use shapes outcomes over time.

News and Announcements -

A new collaboration between Stanford’s SCALE and OpenAI, the creator of ChatGPT, strives to better understand how students and teachers use the popular AI platform and how it impacts learning.

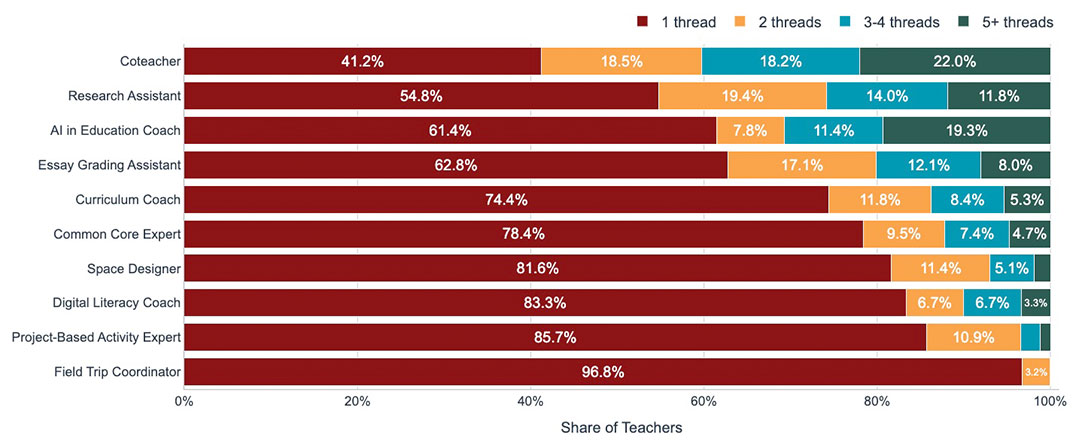

Education is one of the fastest-growing use cases of AI products. Students log on for writing assistance, brainstorming, image creation, and more. Teachers use tools like attendance trackers, get curriculum support for designing learning materials, and much more.

Yet despite the rapid growth – and potential – a substantial gap remains in knowledge about the efficacy of these tools to support learning.

A new research project from the Generative AI for Education Hub at SCALE, an initiative of the Stanford Accelerator for Learning, aims to help fill that gap by studying how ChatGPT is used in K-12 education. In particular, the research will examine how secondary-level teachers and students use ChatGPT.
